The Rise of Generative AI

Generative AI represents one of the most significant breakthroughs in artificial intelligence. Unlike traditional AI systems that classify, predict, or analyze existing data, generative AI creates entirely new content — text, images, music, code, and video — that did not previously exist.

Since the launch of ChatGPT in late 2022, generative AI has moved from research labs into everyday tools used by millions of people. By 2026, generative AI capabilities are embedded in productivity suites, design tools, development environments, and business applications worldwide.

How Generative AI Works

The Foundation: Neural Networks

Generative AI is built on deep neural networks — computational models loosely inspired by the human brain. These networks consist of layers of interconnected nodes that process information and learn patterns from vast amounts of training data.
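
To make "layers of interconnected nodes" concrete, here is a minimal sketch of a forward pass through a tiny two-layer network in plain Python. The layer sizes, the ReLU activation, and the random weights are illustrative assumptions. A real generative model has billions of weights, and those weights are learned during training rather than left at their random starting values.

```python
import random

def dense_layer(inputs, weights, biases, activation):
    """One layer of nodes: each node takes a weighted sum of all inputs plus a bias."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(activation(total))
    return outputs

def relu(z):
    # A common activation: pass positive values through, clip negatives to zero.
    return max(0.0, z)

def identity(z):
    return z

def init_layer(n_in, n_out, rng):
    # Small random starting weights; training would adjust these to fit the data.
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def forward(x, layers):
    """Pass an input vector through a stack of (weights, biases, activation) layers."""
    for weights, biases, activation in layers:
        x = dense_layer(x, weights, biases, activation)
    return x

rng = random.Random(0)
w1, b1 = init_layer(3, 4, rng)  # 3 inputs -> 4 hidden nodes
w2, b2 = init_layer(4, 2, rng)  # 4 hidden nodes -> 2 outputs
network = [(w1, b1, relu), (w2, b2, identity)]
print(forward([1.0, 0.5, -0.2], network))
```

Each output of one layer becomes an input to every node in the next layer. "Deep" networks simply stack many such layers.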

Training Process

The training process for generative AI involves several key phases:

- Data collection: Massive datasets are gathered — for language models, this means trillions of words from books, websites, and other text sources.

- Pre-training: The model learns patterns, relationships, and structures in the data. Language models do this by repeatedly predicting what comes next in a sequence, such as the next word or word fragment (token).

- Fine-tuning: The model is refined on specific tasks or domains to improve performance for particular use cases.

- Alignment: Human feedback helps adjust the model's behavior to be more helpful, harmless, and honest.
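
The idea of "predicting what comes next" can be illustrated with a deliberately tiny stand-in: a bigram model that counts which word follows which, then generates text by repeatedly picking the most likely next word. This toy is an assumption-laden simplification. Real language models use neural networks over subword tokens, learn from vastly more data, and usually sample rather than always taking the top choice. The underlying objective of scoring likely continuations is the same idea, though.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count, for every word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

def generate(model, start, max_words=5):
    """Greedy generation: repeatedly append the predicted next word."""
    output = [start.lower()]
    for _ in range(max_words):
        nxt = predict_next(model, output[-1])
        if nxt is None:
            break  # no known continuation
        output.append(nxt)
    return " ".join(output)

# A hypothetical three-sentence "training set".
corpus = [
    "the model predicts the next word",
    "the model predicts the next token",
    "the next word is likely",
]
model = train_bigram(corpus)
print(predict_next(model, "the"))             # next
print(generate(model, "the", max_words=4))    # the next word is likely
```

In this corpus "next" follows "the" three times and "model" only twice, so the model predicts "next". Fine-tuning and alignment would then further adjust a real model's predictions toward particular tasks and behaviors.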

Key Architectures

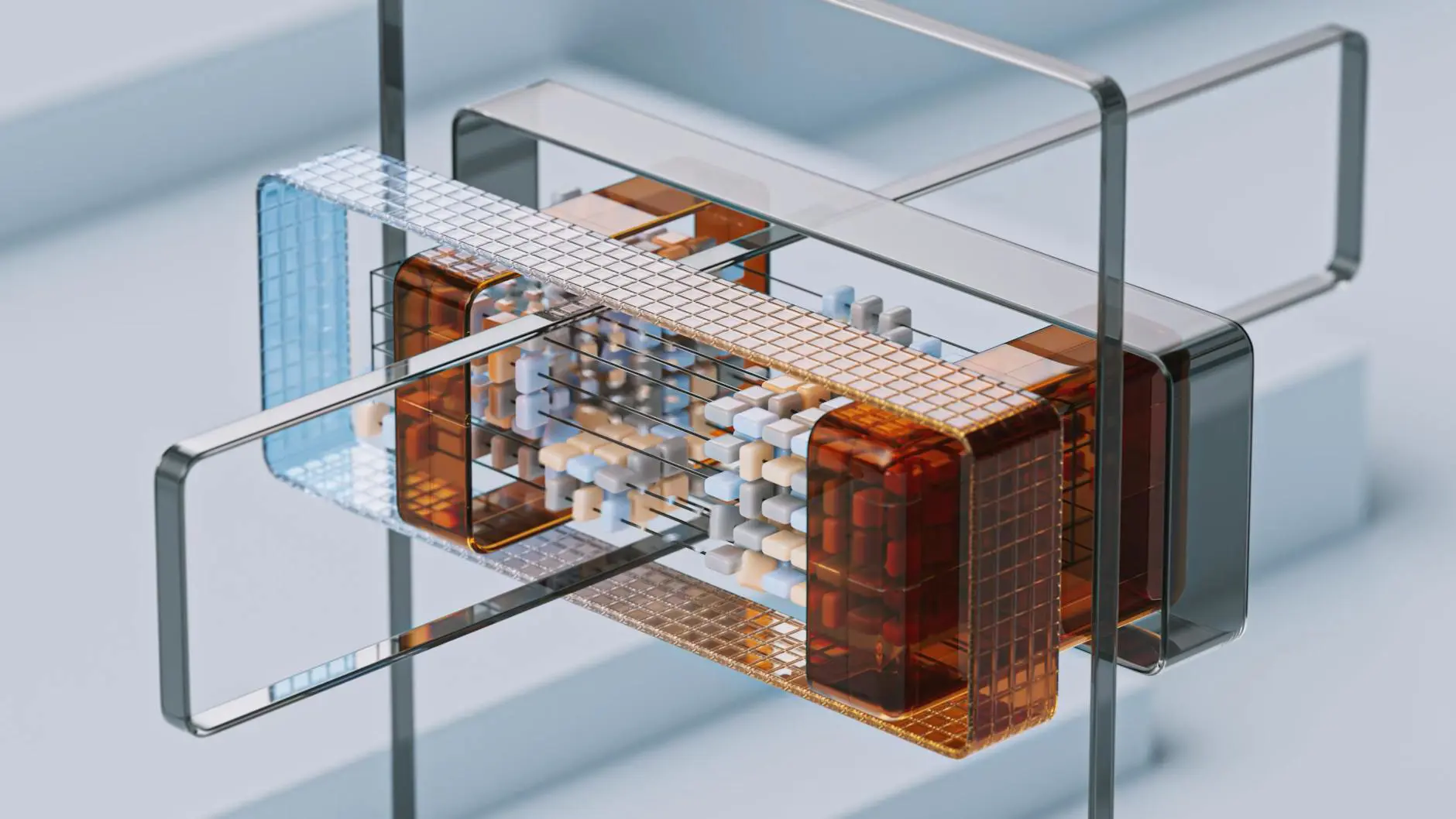

Several neural network architectures power different types of generative AI:

- Transformers: The dominant architecture for language models. Self-attention mechanisms let the model weigh how each part of an input relates to every other part, processing all positions in parallel.

- Diffusion models: Power image generation tools by learning to gradually remove noise from random data until a coherent image emerges.

- GANs (Generative Adversarial Networks): Two neural networks compete — a generator creates content while a discriminator evaluates its quality — pushing both to improve.

- VAEs (Variational Autoencoders): Learn compressed representations of data and generate new samples from that compressed space.
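
The self-attention step at the heart of the Transformer can be sketched in a few lines. This minimal version is written in plain Python to stay dependency-free. It computes softmax(Q·Kᵀ / √d)·V, where Q, K, and V are the queries, keys, and values and d is the vector dimension. As a simplifying assumption, it uses the input vectors directly as queries, keys, and values. Real Transformers learn separate projection matrices for each and run many attention heads in parallel. The 2-dimensional token embeddings below are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Scaled dot-product self-attention with Q = K = V = x (no learned projections).

    x is a list of token vectors, all of dimension d.
    Returns (outputs, weights): one output vector and one weight row per token.
    """
    d = len(x[0])
    outputs, all_weights = [], []
    for query in x:
        # Score this token against every token in the sequence, including itself.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in x]
        weights = softmax(scores)
        # Output = attention-weighted average of the value vectors.
        out = [sum(w * value[i] for w, value in zip(weights, x)) for i in range(d)]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

tokens = [
    [1.0, 0.0],  # hypothetical embedding for token A
    [0.0, 1.0],  # token B
    [1.0, 1.0],  # token C
]
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of weights shows how much one token "attends to" every token in the input. Because every row is computed independently, all positions can be processed at once, which is what makes Transformers efficient to train on parallel hardware.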

Types of Generative AI

Text Generation

Large language models (LLMs) like GPT, Claude, Gemini, and Llama generate human-quality text. Applications include:

- Content writing and editing

- Code generation and debugging

- Translation and summarization

- Question answering and research assistance

- Creative writing and brainstorming

Image Generation

Text-to-image models like DALL-E, Midjourney, and Stable Diffusion create images from natural language descriptions. These tools are used for:

- Marketing visuals and social media content

- Product concept art and prototyping

- Illustration and graphic design

- Architecture and interior design visualization

Audio and Music Generation

AI models can now generate realistic speech, sound effects, and original music compositions. Applications range from podcast production to background music for videos.

Video Generation

Video generation AI, while still maturing, can create short clips from text prompts, animate still images, and assist with video editing tasks.

Code Generation

AI coding assistants generate functional code from natural language descriptions, translate between programming languages, write tests, and explain complex codebases.

Understanding Model Parameters and Scale

The capability of a generative model often correlates with its size, measured in parameters (the adjustable values the model learned during training):

| Model Size | Parameters | Typical Capabilities |
|---|---|---|
| Small | 1-7 billion | Basic text generation, simple tasks |
| Medium | 7-70 billion | Competent writing, code, analysis |
| Large | 70+ billion | Complex reasoning, nuanced content, multi-step tasks |

Larger models generally produce higher-quality output but require more computational resources to train and run.
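
A rough way to see why size drives cost is to estimate how much memory the weights alone occupy. The estimate is the parameter count multiplied by the bytes used to store each parameter. The sketch below assumes common numeric precisions: 4 bytes for 32-bit floats, 2 for 16-bit, and 1 or 0.5 for 8-bit and 4-bit quantized formats. It deliberately ignores activations, caches, and training-time gradients and optimizer state, all of which add substantially more. The helper `dense_layer_params` is a hypothetical illustration of where parameters come from: one fully connected layer has a weight for every input-output pair plus one bias per output.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {
    "fp32": 4.0,  # 32-bit floating point
    "fp16": 2.0,  # 16-bit floating point
    "int8": 1.0,  # 8-bit quantized
    "int4": 0.5,  # 4-bit quantized
}

def weight_memory_gb(num_params, precision="fp16"):
    """Approximate memory to store the weights only, in decimal gigabytes (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9

def dense_layer_params(n_in, n_out):
    """Parameters in one fully connected layer: a weight per input-output pair plus biases."""
    return n_in * n_out + n_out

# Boundary sizes taken from the table above.
for params in (1e9, 7e9, 70e9):
    fp16 = weight_memory_gb(params, "fp16")
    int4 = weight_memory_gb(params, "int4")
    print(f"{params / 1e9:.0f}B params: {fp16:.1f} GB at fp16, {int4:.1f} GB at int4")

print(dense_layer_params(4096, 4096))  # one 4096 x 4096 layer alone
```

By this estimate, a 7-billion-parameter model needs about 14 GB just for its weights at 16-bit precision, and a 70-billion-parameter model about 140 GB. This is why smaller or quantized models are the ones that fit on a single consumer GPU or an edge device.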

Practical Applications for Businesses

Generative AI offers concrete value across business functions:

- Marketing: Generate ad copy, social media posts, and email campaigns at scale.

- Product development: Rapidly prototype designs and generate product descriptions.

- Customer support: Power intelligent chatbots that understand context and provide relevant answers.

- Software development: Accelerate coding, automate testing, and generate documentation.

- Research: Summarize documents, extract insights, and identify trends in large datasets.

Limitations and Challenges

Despite its power, generative AI has important limitations:

- Hallucinations: Models can generate plausible-sounding but factually incorrect information.

- Bias: Models reflect biases present in their training data.

- Copyright concerns: Questions remain about the intellectual property implications of AI-generated content.

- Quality consistency: Output quality can vary, requiring human review and editing.

- Cost: Running large models demands significant computational resources.

The Future of Generative AI

The field is evolving rapidly. Emerging trends include multimodal models that seamlessly handle text, images, and audio together; smaller, more efficient models that run on edge devices; and AI agents that combine generative capabilities with the ability to take actions in the real world. Organizations like Ekolsoft are helping businesses navigate this landscape by building custom generative AI solutions that address specific industry needs.

Generative AI does not replace creativity — it amplifies it. The most impactful results come from humans who learn to collaborate effectively with these powerful tools.