Table of Contents

- 1. Introduction: Why Content Verification Matters

- 2. Detecting AI-Generated Text

- 3. Identifying AI-Generated Images

- 4. Deepfake Video Detection

- 5. AI Voice Cloning and Detection

- 6. AI Content Detection Tools

- 7. Watermarking Technologies

- 8. C2PA Standard and Content Provenance

- 9. Practical Tips and Checklist

- 10. Looking Ahead

- 11. Frequently Asked Questions (FAQ)

Introduction: Why Content Verification Matters

Artificial intelligence technologies are becoming increasingly sophisticated, capable of producing text, images, videos, and audio that are nearly indistinguishable from human-created content. Tools like ChatGPT, Midjourney, DALL-E, Sora, and ElevenLabs have made it possible for anyone to generate professional-quality content in minutes. However, this convenience brings significant risks.

Disinformation campaigns, fake news, deepfake videos, and AI-powered scams are now among the most pressing threats to digital trust. According to research conducted in 2025, an estimated 15-20% of online content is generated by artificial intelligence, and that share is growing rapidly.

In this comprehensive guide, we will explore methods for detecting every type of AI-generated content, the tools available to you, and practical tips for staying informed and protected.

Detecting AI-Generated Text

While AI text generators (LLMs) can now produce fluent and coherent writing, a careful reader can still identify certain characteristic patterns. Here are the common indicators of AI-written text:

Linguistic Clues

- Excessively polished language: AI text is typically free of grammatical errors and appears overly "smooth." Human writers naturally produce minor inconsistencies and imperfections.

- Repetitive patterns: Overuse of transitional phrases like "In conclusion," "It is important to note," "Furthermore," and "In this context" is a telltale sign.

- Average complexity: AI text tends to maintain a consistently moderate complexity level, neither too simple nor too complex, across the entire piece.

- Lack of emotional depth: Personal anecdotes, original metaphors, and emotional nuances are generally weak or absent in AI-generated content.

- Vague references: AI may avoid citing specific sources or may "hallucinate" references that do not actually exist.

Statistical Analysis Methods

The primary statistical methods used in AI text detection include:

| Method | Description | Accuracy |
|---|---|---|
| Perplexity Analysis | Measures how "surprising" the text is. AI text exhibits low perplexity scores. | 70-80% |
| Burstiness Measurement | Analyzes variation in sentence length and structure. AI text is more homogeneous. | 65-75% |
| Token Probability Distribution | Checks alignment of word choices with model probability distributions. | 75-85% |
| Stylometric Analysis | Searches for individual writing fingerprints in style patterns. | 60-70% |
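
To make the burstiness idea concrete, here is a minimal sketch that scores a text by how much its sentence lengths vary. The function names and the choice of coefficient of variation as the score are assumptions made for illustration; real detectors use more elaborate features, and a low score on its own proves nothing.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! and ? and return the word count of each non-empty sentence."""
    sentences = re.split(r"[.!?]+", text)
    return [len(s.split()) for s in sentences if s.strip()]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (population stdev / mean).

    Returns 0.0 when the text has fewer than two sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Hypothetical sample texts: one with uniform sentences, one with varied ones.
uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = (
    "Stop. The storm that had been building all afternoon "
    "finally broke over the hills. We ran."
)

print(burstiness(uniform))  # every sentence has 4 words, so the score is 0.0
print(burstiness(varied))   # noticeably higher, because lengths are 1, 13 and 2
```

A higher score means more variation between sentences, which is the pattern the table associates with human writing. Treat it as one weak signal among several.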

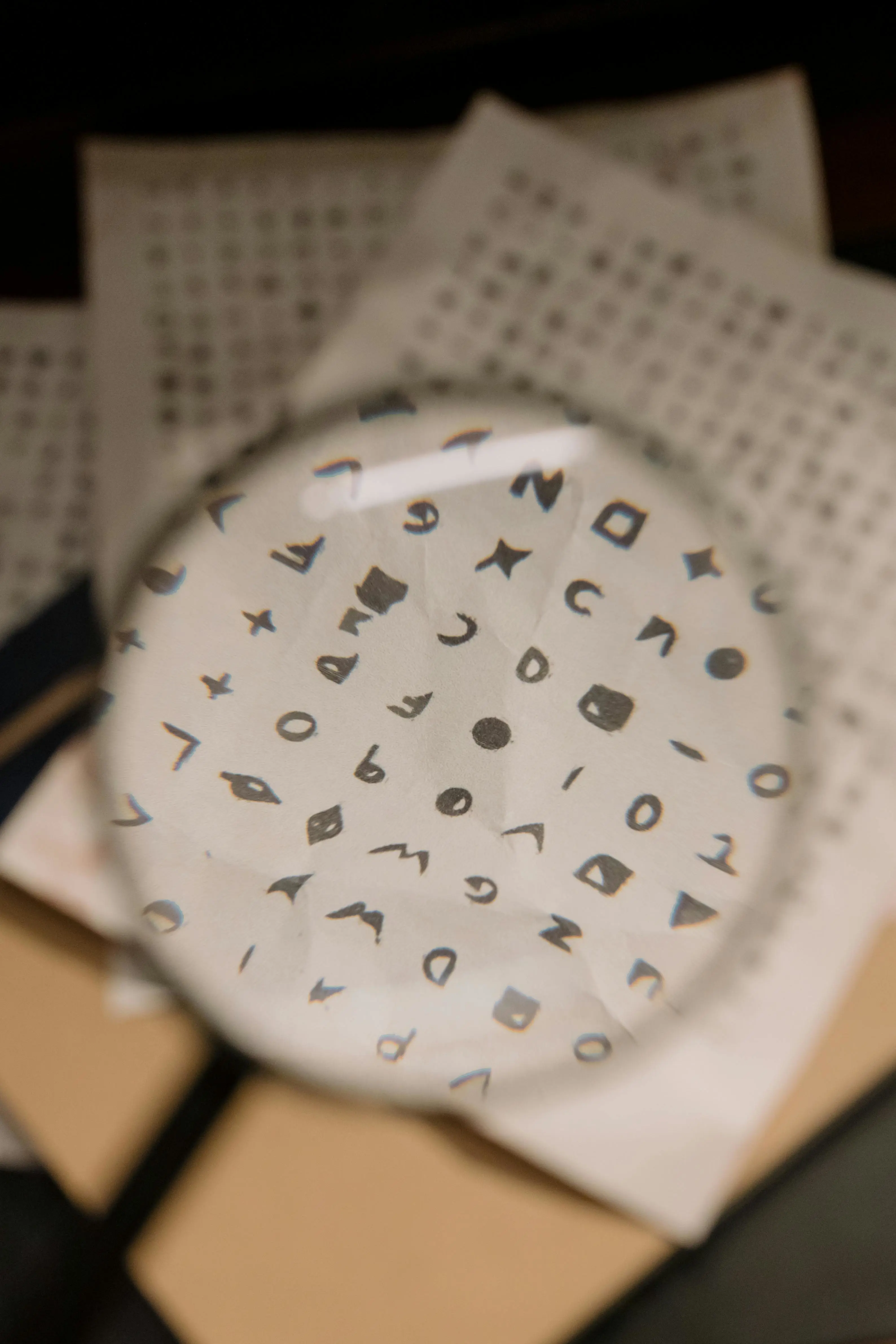

Identifying AI-Generated Images

AI image generators (Midjourney, DALL-E 3, Stable Diffusion, Flux) can produce incredibly realistic visuals. However, careful examination can still reveal certain anomalies.

Visual Inspection Tips

- Hands and fingers: AI images can still struggle with human hands — extra or missing fingers, unnatural positions, and odd joint angles remain common issues.

- Text and lettering: Signs, labels, and text within AI-generated images often contain nonsensical or garbled characters.

- Symmetry issues: Earrings, glasses, ears, and other elements that should be symmetrical may show inconsistencies.

- Background inconsistencies: Background details may blur, objects may warp, or there may be illogical transitions between elements.

- Texture anomalies: Hair, fabric, and natural textures can display repetitive or melting patterns when closely examined.

- Lighting and shadow mismatches: Different objects within the same scene may show inconsistent lighting directions and shadow angles.

Technical Analysis Methods

When you encounter a visually suspicious piece of content, you can apply these technical methods:

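- EXIF data check: Authentic photographs usually carry camera information, shooting date, and sometimes GPS coordinates in their metadata. AI-generated images typically lack this data, but its absence is not proof on its own, because many platforms strip metadata on upload.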

- Reverse image search: Use Google Lens or TinEye to search for the original source. AI-generated images typically cannot be found anywhere else.

- Frequency analysis: Use a Fourier transform to examine the image's frequency spectrum. AI images often show characteristic frequency patterns.

- Pixel-level inspection: When zoomed in, AI images display smooth gradients rather than typical sensor noise patterns.
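
As an illustration of the EXIF check, the sketch below scans the header segments of a JPEG byte string and reports whether an Exif block is present. It relies only on the general JPEG segment layout (a marker byte pair followed by a two-byte length); the helper names and the synthetic test bytes are assumptions made for this example, and in practice you would more likely use an established metadata viewer.

```python
import struct


def has_exif_segment(data: bytes) -> bool:
    """Return True if the JPEG bytes contain an APP1 segment tagged 'Exif'."""
    if not data.startswith(b"\xff\xd8"):  # SOI marker; anything else is not a JPEG
        return False
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            return False  # malformed header, stop scanning
        marker = data[pos + 1]
        if marker == 0xDA:  # SOS: compressed image data begins, metadata is over
            return False
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        # The length field counts its own two bytes, so the payload ends at pos + 2 + length.
        payload = data[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length
    return False


def segment(marker: int, payload: bytes) -> bytes:
    """Build one JPEG segment (hypothetical helper used only to create test data)."""
    return bytes([0xFF, marker]) + struct.pack(">H", len(payload) + 2) + payload


# Synthetic headers, not real images: one with an Exif block, one without.
with_exif = (
    b"\xff\xd8"
    + segment(0xE0, b"JFIF\x00")
    + segment(0xE1, b"Exif\x00\x00placeholder")
    + b"\xff\xda"
)
without_exif = b"\xff\xd8" + segment(0xE0, b"JFIF\x00") + b"\xff\xda"

print(has_exif_segment(with_exif))     # True
print(has_exif_segment(without_exif))  # False
```

For a real file you could pass in `open(path, "rb").read()`. Metadata can be stripped or forged, so the result is a hint rather than a verdict.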

Deepfake Video Detection

Deepfake videos are produced with AI techniques that swap one person's face with another's or generate entirely synthetic footage. Tools like Sora, Runway, Kling, and HeyGen have revolutionized this field, making deepfake creation more accessible than ever.

Signs of Deepfake Videos

- Unnatural blinking: In deepfake videos, the frequency and duration of blinking often differ from natural patterns.

- Face boundary blurring: Blurring or flickering may be observed at the hairline, jawline, and neck transitions.

- Lip-audio desynchronization: During speech, lip movements may not be perfectly synchronized with the audio.

- Light response: Light reflections may not change naturally as the face moves. In particular, reflections in the eyes may remain static.

- Inter-frame inconsistencies: When slowed down, sudden face changes between frames can become visible.

- Teeth and tongue issues: Mouth interior details are typically blurry or inconsistent in deepfakes.
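
The blinking check can be made measurable. The sketch below assumes you already have a per-frame "eye openness" value (for example, an eye aspect ratio from a facial landmark tool of your choice); that input signal, the threshold of 0.2, and the two-frame minimum are all hypothetical values chosen for illustration.

```python
def count_blinks(openness: list[float], threshold: float = 0.2, min_frames: int = 2) -> int:
    """Count runs of at least `min_frames` consecutive frames below `threshold`."""
    blinks = 0
    run = 0
    for value in openness:
        if value < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # a blink still in progress when the clip ends
        blinks += 1
    return blinks


def blinks_per_minute(
    openness: list[float], fps: float, threshold: float = 0.2, min_frames: int = 2
) -> float:
    """Blink rate for a clip sampled at `fps` frames per second."""
    if not openness or fps <= 0:
        return 0.0
    duration_minutes = len(openness) / fps / 60
    return count_blinks(openness, threshold, min_frames) / duration_minutes


# Synthetic 10-second clip at 30 fps containing two short blinks.
clip = [0.3] * 100 + [0.1] * 3 + [0.3] * 100 + [0.1] * 3 + [0.3] * 94

print(count_blinks(clip))                       # 2
print(round(blinks_per_minute(clip, fps=30)))   # 12
```

A rate far outside what you observe in verified footage of the same person is a reason to look closer, not a conclusion.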

AI Voice Cloning and Detection

AI voice cloning technologies can replicate a person's voice from just a few seconds of sample audio. Platforms like ElevenLabs, Resemble AI, and Coqui have made this technology widely accessible. This poses a significant risk, particularly for voice phishing (vishing) attacks.

Tips for Detecting AI-Generated Audio

- Monotone intonation: AI voices may still struggle with natural prosody — the rhythmic patterns of stress and intonation in natural speech.

- Lack of breathing: In natural speech, breathing sounds are audible between sentences and phrases. AI-generated audio may lack these or produce artificial-sounding breaths.

- Emotional expression: Sudden emotional transitions such as surprise, anger, or sadness may not sound natural in AI-generated voices.

- Background sounds: A completely clean audio recording with zero background noise can be suspicious, as real recordings nearly always contain ambient sound.

- Spectrogram analysis: Examining the spectrogram of an audio file can reveal characteristic artifacts present in AI-generated speech.
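
The "suspiciously clean audio" tip can be approximated with a noise-floor estimate. This sketch assumes the audio has already been decoded into floating-point samples between -1.0 and 1.0; the frame size and the synthetic signals are illustrative assumptions, and the quietest-frame level is only a rough stand-in for a proper noise analysis.

```python
import math
import random


def frame_rms_dbfs(samples: list[float], frame_size: int = 1024) -> list[float]:
    """RMS level of each full frame in dBFS, for samples scaled to [-1.0, 1.0]."""
    levels = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        rms = math.sqrt(sum(x * x for x in frame) / frame_size)
        levels.append(20 * math.log10(rms) if rms > 0 else float("-inf"))
    return levels


def noise_floor_dbfs(samples: list[float], frame_size: int = 1024) -> float:
    """Level of the quietest frame, used here as a rough noise-floor estimate."""
    levels = frame_rms_dbfs(samples, frame_size)
    return min(levels) if levels else float("-inf")


# Synthetic test signals: a tone followed by either digital silence or faint noise.
rng = random.Random(0)
tone = [0.5 * math.sin(2 * math.pi * 220 * n / 16000) for n in range(4096)]
digital_silence = [0.0] * 2048
room_noise = [rng.uniform(-0.01, 0.01) for _ in range(2048)]

print(noise_floor_dbfs(tone + digital_silence))  # -inf: the pauses are perfectly silent
print(noise_floor_dbfs(tone + room_noise))       # a finite level, as in a real room
```

Pauses that drop to exact digital silence are unusual for a phone call or a room recording, although noise suppression in calling apps can produce a similar effect.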

AI Content Detection Tools

Numerous tools are designed to detect AI-generated content. The tables below compare the most widely used ones:

Text Detection Tools

| Tool | Type | Features | Pricing |
|---|---|---|---|
| GPTZero | Text | Perplexity and burstiness analysis, sentence-level detection | Free / Pro |
| Originality.ai | Text | High accuracy, plagiarism check integration | Paid |
| Copyleaks | Text | Multi-language support, API access | Free / Pro |
| Sapling AI Detector | Text | Fast analysis, browser extension | Free |

Image and Video Detection Tools

| Tool | Type | Features |
|---|---|---|
| Hive Moderation | Image + Video | Real-time AI content detection, API support |
| Sensity AI | Deepfake Video | Face swap and fully synthetic video detection |
| FotoForensics | Image | ELA analysis, metadata inspection |
| Illuminarty | Image | AI image generator model identification |

Watermarking Technologies

One of the most promising approaches in AI content detection is watermarking technology. These technologies embed invisible marks into AI-generated content, making the content's origin verifiable.

Text Watermarking

Text watermarking embeds a statistical "fingerprint" into text produced by AI models. In this method, the model applies systematic biases in certain word choices. For example:

Watermarking Principle:

- During token generation, the vocabulary is split into "green" and "red" lists at each step

- The model is guided to prefer words from the green list

- This preference is imperceptible to humans but statistically detectable

- In sufficiently long text, the watermark can be reliably verified
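
The principle above can be sketched in a few lines. This is a simplified toy in the spirit of published green/red list schemes, not the actual method used by SynthID or any other product. The hash-based list assignment, the `gamma` fraction, the tiny vocabulary, and the toy generator are all assumptions made for illustration.

```python
import hashlib
import math
import random


def is_green(prev_token: str, token: str, gamma: float = 0.5) -> bool:
    """Pseudo-randomly place `token` on the green list, seeded by the previous token."""
    digest = hashlib.sha256(f"{prev_token}\x00{token}".encode("utf-8")).digest()
    # Map the first 8 bytes to [0, 1); values below gamma count as green.
    return int.from_bytes(digest[:8], "big") / 2**64 < gamma


def green_z_score(tokens: list[str], gamma: float = 0.5) -> float:
    """z-score of the green-token count against what unwatermarked text would give."""
    n = len(tokens) - 1  # number of (previous, current) pairs scored
    if n < 1:
        return 0.0
    green = sum(is_green(a, b, gamma) for a, b in zip(tokens, tokens[1:]))
    return (green - gamma * n) / math.sqrt(n * gamma * (1 - gamma))


def generate(length: int, watermark: bool, rng: random.Random) -> list[str]:
    """Toy 'model': picks among 5 random candidates, preferring green ones if watermarking."""
    vocab = [f"w{i}" for i in range(50)]
    tokens = ["w0"]
    for _ in range(length):
        candidates = rng.sample(vocab, 5)
        if watermark:
            greens = [c for c in candidates if is_green(tokens[-1], c)]
            candidates = greens or candidates  # fall back if no candidate is green
        tokens.append(candidates[0])
    return tokens


rng = random.Random(42)
watermarked_z = green_z_score(generate(200, watermark=True, rng=rng))
plain_z = green_z_score(generate(200, watermark=False, rng=rng))

print(watermarked_z)  # large positive value: far more green tokens than chance
print(plain_z)        # close to zero: green and red appear about equally often
```

The detector never needs the model itself, only the rule that splits the vocabulary. It also shows why short or heavily paraphrased text is hard to verify: with few scored pairs, or with many tokens replaced, the z-score drifts back toward zero.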

Google DeepMind's SynthID technology and OpenAI's watermarking system are pioneering efforts in this area. However, text watermarking has limitations: paraphrasing or translating the text can degrade or remove the watermark.

Image Watermarking

Image watermarking is a more robust technology. Systems like SynthID make pixel-level changes to images that are invisible to the human eye but create a digital signature. This signature:

- Is resistant to cropping, resizing, and compression

- Can survive even when screenshots are taken

- Is invisible to human eyes but algorithmically detectable

- Does not negatively affect image quality

Audio and Video Watermarking

Audio watermarking operates by making frequency changes below the audible threshold. Video watermarking can apply separate watermarks to both visual and audio channels. Companies like Meta, Google, and Microsoft are actively working in this space to develop robust watermarking solutions for all media types.

C2PA Standard and Content Provenance

C2PA (Coalition for Content Provenance and Authenticity) is an open standard designed to verify the origin of digital content. Backed by major companies including Adobe, Microsoft, Google, Intel, and the BBC, this standard adds a "nutrition label" style structure to digital content.

How C2PA Works

The C2PA standard cryptographically secures the following information about content:

- Content Credentials: Information about who created the content, when, and with which tools

- Editing history: A record of all modifications made to the content

- AI usage declaration: Whether AI was used in creating the content

- Digital signature: A cryptographic signature guaranteeing that none of this information has been tampered with
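
The core idea, binding claims to a hash of the content and signing the result, can be illustrated with a toy example. This is not the C2PA manifest format: the real standard defines its own data structures and relies on certificate-based digital signatures, whereas this sketch substitutes a shared-secret HMAC so that it runs with the standard library alone. All field names and the key are hypothetical.

```python
import hashlib
import hmac
import json


def make_manifest(content: bytes, claims: dict, key: bytes) -> dict:
    """Bind claims to the content hash and sign the result (HMAC stands in for a real signature)."""
    body = {"claims": claims, "content_sha256": hashlib.sha256(content).hexdigest()}
    serialized = json.dumps(body, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, serialized, hashlib.sha256).hexdigest()
    return {"body": body, "signature": signature}


def verify_manifest(content: bytes, manifest: dict, key: bytes) -> bool:
    """Check that neither the claims nor the content changed after signing."""
    serialized = json.dumps(manifest["body"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, serialized, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    hash_ok = manifest["body"]["content_sha256"] == hashlib.sha256(content).hexdigest()
    return signature_ok and hash_ok


key = b"demo-signing-key"      # hypothetical secret, for illustration only
image = b"pretend image bytes"  # stand-in for real file contents
manifest = make_manifest(image, {"tool": "ExampleEditor", "ai_generated": True}, key)

print(verify_manifest(image, manifest, key))            # True: untouched
print(verify_manifest(image + b"edit", manifest, key))  # False: content was altered

manifest["body"]["claims"]["ai_generated"] = False      # tamper with the declaration
print(verify_manifest(image, manifest, key))            # False: claims were altered
```

Changing either the content or the declared information invalidates the check, which is the property the digital signature in the list above is meant to guarantee.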

The greatest advantage of C2PA is that it takes a proactive rather than a reactive approach. Instead of guessing whether content was AI-generated, it makes it possible to verify the source directly. That gives it the greatest potential to break the arms-race cycle between AI generators and detectors.

Practical Tips and Checklist

Use the following checklist when evaluating suspicious content you encounter online:

General Verification Checklist

- Verify the source: Does the content come from a trusted source? Check the publisher's history and reputation.

- Cross-reference: Try to verify the same information through multiple independent, reliable sources.

- Examine metadata: Check EXIF data for images, creation dates for documents, and any available provenance information.

- Perform reverse searches: Use Google Lens or TinEye to find the original source of images.

- Use detection tools: Run content through GPTZero, Hive Moderation, or similar AI detection tools.

- Check C2PA information: Look for Content Credentials data attached to the content.

- Apply common sense: Content that seems "too good to be true" usually is not true.

Quick Checks by Content Type

| Content Type | Initial Checks | Advanced Analysis |
|---|---|---|
| Text | Source verification, writing style analysis | Scan with GPTZero or similar tools |
| Image | Hand/finger check, text check, EXIF data | Hive Moderation, reverse image search |
| Video | Face boundaries, blinking, lip sync | Sensity AI, frame-by-frame analysis |
| Audio | Intonation naturalness, breathing sounds | Spectrogram analysis, AI voice detectors |

Looking Ahead

The field of AI content detection is evolving rapidly. Here are the key developments expected in the coming years:

- Legal regulations: The EU AI Act and similar legislation are beginning to mandate labeling of AI-generated content across jurisdictions.

- Platform policies: Social media platforms will continue to expand their AI content labeling systems and transparency requirements.

- C2PA adoption: The Content Credentials standard is expected to be adopted across a broad ecosystem, from cameras to social media platforms.

- Education and awareness: Digital literacy curricula will increasingly incorporate AI content detection topics at all levels of education.

- Real-time detection: Browser extensions and mobile applications will offer instant AI content alerts while users consume content online.

Ultimately, AI content detection is an arms race, and technical solutions alone will not be sufficient. The combination of critical thinking skills, source verification habits, and technological tools will empower us to navigate this challenge successfully.

Frequently Asked Questions (FAQ)

Can AI-written text be detected with 100% accuracy?

No, with current technology it is not possible to detect AI text with 100% accuracy. Even the best tools achieve approximately 85-95% accuracy rates and can produce false positive results. This is why using multiple tools in combination and evaluating results within context is essential for reliable detection.
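
A short calculation shows why false positives matter so much. The sketch below applies Bayes' rule to a single flagged text. The prevalence, detection rate, and false positive rate are illustrative assumptions, not measurements of any particular tool.

```python
def probability_ai_given_flag(
    prevalence: float, detection_rate: float, false_positive_rate: float
) -> float:
    """Probability that a flagged text is really AI-written (Bayes' rule)."""
    flagged_ai = prevalence * detection_rate
    flagged_human = (1 - prevalence) * false_positive_rate
    return flagged_ai / (flagged_ai + flagged_human)


# Assumed numbers: the detector catches 90% of AI text and wrongly flags 10% of human text.
print(round(probability_ai_given_flag(0.20, 0.90, 0.10), 3))  # 0.692
print(round(probability_ai_given_flag(0.05, 0.90, 0.10), 3))  # 0.321
```

Under these assumptions, if one in five texts is AI-written, about 69% of flagged texts are truly AI-written. If only one in twenty is, that falls to about 32%, so most flags would land on human writers.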

What free tools can I use to detect deepfake videos?

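The InVID/WeVerify browser extension is free and supports video verification, and FotoForensics, although built for images, can be applied to still frames extracted from a video. Microsoft Video Authenticator is often mentioned as well, but its availability has been limited to selected organizations rather than the general public. Additionally, you can check manually by slowing down the video and examining face boundaries, blinking patterns, and lip-audio synchronization frame by frame.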

Is the C2PA standard mandatory for all AI content?

Currently, C2PA is a voluntary standard. However, under the EU AI Act, obligations to label AI-generated content are being introduced. Companies like Adobe, Google, and Microsoft have already integrated C2PA into their products. Broader legal mandates are expected in the future as the technology matures and adoption increases.

How can I protect myself from AI voice cloning scams?

Establish a family "safe word" that only you and your loved ones know. When a phone call requests a money transfer, verify the caller by calling back on a number you already know. Be cautious of calls that pressure you to act urgently, and avoid making decisions while emotions are running high. Consider limiting how much of your voice you share on social media platforms.

Will the flaws in AI-generated images be fixed over time?

Yes, AI image generators are continuously improving, and previously obvious errors (such as finger count issues and text rendering) are gradually decreasing. This is precisely why relying solely on visual anomalies is becoming insufficient. Metadata verification, reverse image searching, and C2PA validation are becoming increasingly important as complementary detection methods.

Can watermarking technology be removed?

Text watermarking can be partially degraded through paraphrasing or translation. Image watermarking is considerably more robust, providing protection against cropping, resizing, and compression operations. However, no watermarking system is entirely removal-proof. This is why watermarking works most effectively when used in conjunction with provenance systems like C2PA to provide multiple layers of verification.