Two of the most frequently confused concepts in artificial intelligence are Machine Learning (ML) and Deep Learning (DL). While they are closely related, they differ fundamentally in their approaches, capabilities, and ideal applications. In this comprehensive guide, we break down both fields in detail, compare them side by side, and help you understand when to use which approach.

1. What Is Machine Learning?

Machine Learning (ML) is a subset of artificial intelligence that enables computers to learn from data without being explicitly programmed. The term was coined by Arthur Samuel in 1959 and refers to algorithms that improve their performance with experience, that is, with data.

Core ML Pipeline

ML uses mathematical and statistical methods to extract patterns from data. A typical ML workflow includes:

- Data Collection: Gathering relevant and sufficient data

- Preprocessing: Cleaning, transforming, and preparing the data

- Feature Engineering: Expert-driven selection of important features

- Model Training: Algorithm learns from the data

- Evaluation: Performance is measured with test data

- Deployment: Successful model goes to production
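The workflow above can be sketched end to end in plain Python. This is only an illustration under stated assumptions: the parcel dataset is synthetic, the "expert" feature (area) is invented for the example, and a one-feature decision stump stands in for a real algorithm. All names are hypothetical.

```python
import random

random.seed(0)

# 1. Data collection: synthetic parcels (width, height, is_oversized).
#    Every tenth row has a missing width so preprocessing has work to do.
raw = []
for i in range(220):
    width, height = random.uniform(1, 10), random.uniform(1, 10)
    label = int(width * height > 25)
    raw.append((None if i % 10 == 0 else width, height, label))

# 2. Preprocessing: drop incomplete rows.
clean = [row for row in raw if row[0] is not None]

# 3. Feature engineering: a domain expert knows that area matters,
#    not width or height on their own.
dataset = [(w * h, label) for w, h, label in clean]

# Hold out the last quarter of the rows for evaluation.
split = int(0.75 * len(dataset))
train, test = dataset[:split], dataset[split:]

def errors(threshold, data):
    """Count rows where the rule `area > threshold` disagrees with the label."""
    return sum(int(area > threshold) != label for area, label in data)

# 4. Model training: pick the area threshold with the fewest training mistakes.
def fit_stump(data):
    areas = sorted(area for area, _ in data)
    candidates = [(a + b) / 2 for a, b in zip(areas, areas[1:])]
    return min(candidates, key=lambda t: errors(t, data))

threshold = fit_stump(train)

# 5. Evaluation: accuracy on rows the model never saw during training.
accuracy = 1 - errors(threshold, test) / len(test)
print(f"learned threshold: {threshold:.1f}, test accuracy: {accuracy:.2f}")

# 6. Deployment would wrap the rule `area > threshold` behind an API or batch job.
```

Note how much of the work happens in step 3: once the right feature exists, even a trivial model performs well.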

Key Insight

In classical ML, feature engineering is often the most critical step: domain experts extract meaningful features from raw data that the model can learn from. Deep learning, by contrast, learns these features automatically, which is one of the fundamental differences between the two.

2. Types of Machine Learning

Supervised Learning

Works with labeled data. The model learns from input-output pairs to make predictions on new inputs. Think of it as learning with a teacher providing correct answers.

Algorithms:

- Linear Regression, Logistic Regression

- Decision Trees, Random Forest

- Gradient Boosting (XGBoost, LightGBM, CatBoost)

- Support Vector Machines (SVM)

- K-Nearest Neighbors (KNN), Naive Bayes

Use Cases: Spam detection, credit scoring, disease diagnosis, price prediction, image classification
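To make "learning from input-output pairs" concrete, here is a minimal K-Nearest Neighbors sketch in plain Python. The features (link count and ALL-CAPS word count per email) and every data point are made up for illustration.

```python
from collections import Counter
from math import dist

# Labeled training data: ([links, all_caps_words], label). Values are invented.
training = [
    ([0, 0], "ham"), ([1, 0], "ham"), ([0, 1], "ham"), ([1, 1], "ham"),
    ([5, 4], "spam"), ([6, 5], "spam"), ([4, 6], "spam"), ([7, 3], "spam"),
]

def knn_predict(x, data, k=3):
    """Predict the majority label among the k training points closest to x."""
    nearest = sorted(data, key=lambda pair: dist(x, pair[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

print(knn_predict([0, 2], training))  # → ham
print(knn_predict([6, 4], training))  # → spam
```

The labels play the role of the teacher: the model never sees a rule for spam, only examples with the correct answer attached.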

Unsupervised Learning

Works with unlabeled data. The model discovers hidden patterns, groups, and structures on its own, with no correct answers to guide it.

Algorithms:

- K-Means Clustering, Hierarchical Clustering

- DBSCAN, Gaussian Mixture Models

- PCA, t-SNE, UMAP (dimensionality reduction)

- Association Rules (Apriori)

Use Cases: Customer segmentation, anomaly detection, recommendation engines, market basket analysis
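As a rough illustration of discovering groups without labels, the following is a minimal K-Means sketch in plain Python. The customer data is invented, and the simple evenly spaced initialization is an assumption made to keep the example deterministic (real implementations usually use smarter seeding such as k-means++).

```python
from math import dist
from statistics import mean

# Unlabeled customers: [annual_spend_k, visits_per_month]. Values are invented.
points = [[1, 2], [2, 1], [1.5, 1.5], [8, 9], [9, 8], [8.5, 9.5]]

def kmeans(data, k, iterations=10):
    # Naive deterministic initialization: k evenly spaced points from the data.
    step = len(data) // k
    centroids = [list(data[i * step]) for i in range(k)]
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in data:
            nearest = min(range(k), key=lambda i: dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its previous centroid).
        centroids = [
            [mean(dim) for dim in zip(*cluster)] if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
    labels = [min(range(k), key=lambda i: dist(p, centroids[i])) for p in data]
    return centroids, labels

centroids, labels = kmeans(points, k=2)
print(labels)  # → [0, 0, 0, 1, 1, 1]
```

No labels were provided, yet the low-spend and high-spend customers end up in separate segments.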

Reinforcement Learning

An agent takes actions in an environment, receiving rewards or penalties. The goal is to maximize cumulative reward through trial and error.

Algorithms:

- Q-Learning, Deep Q-Network (DQN)

- Policy Gradient, Actor-Critic (PPO, A3C)

- Monte Carlo Tree Search (MCTS)

Use Cases: Game playing (AlphaGo), robotic control, autonomous vehicles, resource optimization
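The reward-driven loop can be sketched with tabular Q-Learning on a toy problem. The environment below (five states on a line, reward only at the right end) and all hyperparameters are assumptions chosen for illustration, not a benchmark.

```python
import random

random.seed(42)

N_STATES = 5                  # states 0..4 on a line; state 4 is the goal
ACTIONS = [0, 1]              # 0 = left, 1 = right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.1

# Q[state][action] estimates the cumulative discounted reward.
Q = [[0.0, 0.0] for _ in range(N_STATES)]

def step(state, action):
    """Toy environment: move left or right; reward 1 only on reaching the goal."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward

def choose_action(state):
    """Epsilon-greedy: mostly exploit, sometimes explore; ties are broken randomly."""
    if random.random() < EPSILON or Q[state][0] == Q[state][1]:
        return random.choice(ACTIONS)
    return max(ACTIONS, key=lambda a: Q[state][a])

for episode in range(500):
    state = 0
    for _ in range(100):      # cap the episode length
        action = choose_action(state)
        next_state, reward = step(state, action)
        # Q-Learning update: nudge the estimate toward reward + discounted best next value.
        target = reward + GAMMA * max(Q[next_state])
        Q[state][action] += ALPHA * (target - Q[state][action])
        state = next_state
        if state == N_STATES - 1:
            break

policy = ["left" if q[0] > q[1] else "right" for q in Q[:-1]]
print(policy)  # → ['right', 'right', 'right', 'right']
```

The agent is never told that the goal is on the right; it works this out purely from the rewards it collects through trial and error.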

Machine Learning Types Hierarchy

Machine Learning (ML)

- Supervised Learning: Labeled Data

- Unsupervised Learning: Unlabeled Data

- Reinforcement Learning: Reward/Penalty

3. What Is Deep Learning?

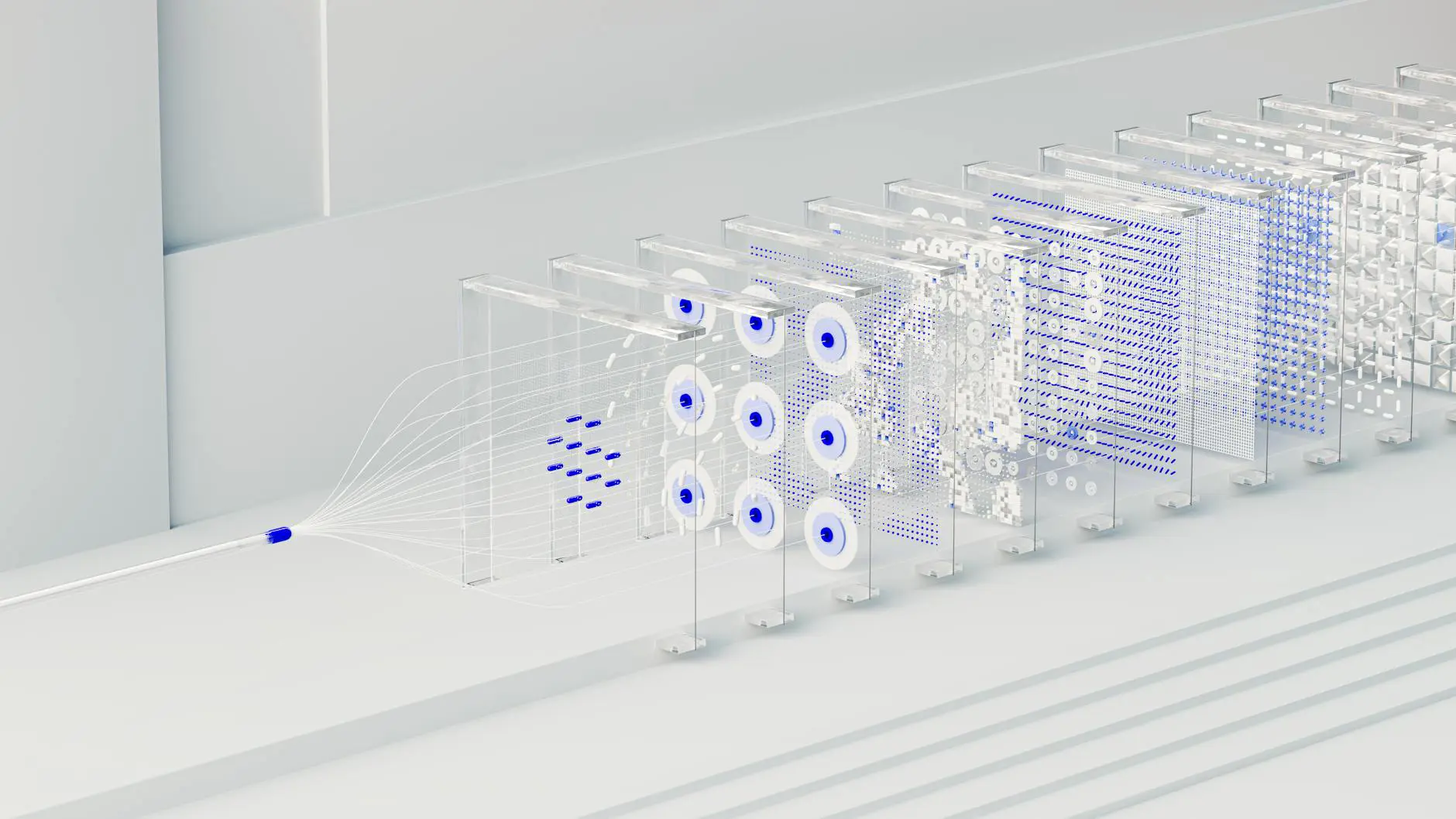

Deep Learning (DL) is a subset of machine learning that uses multi-layered artificial neural networks (deep neural networks) to learn from data. The word "deep" refers to the large number of hidden layers in the neural network.

Unlike traditional ML, deep learning can perform automatic feature extraction. It takes raw data (pixels, audio waves, text) and learns meaningful representations on its own. This minimizes human intervention and enables solving much more complex problems.

Neural Network Structure

| Layer | Description | Function |

|---|---|---|

| Input Layer | Receives raw data | Accepts pixels, words, audio signals |

| Hidden Layers | Feature extraction and transformation | Each layer learns increasingly abstract features |

| Output Layer | Produces results | Classification, regression, or generation outputs |
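The three layer types in the table can be traced in a tiny forward pass. This is a sketch only: the weights are arbitrary numbers picked for the example, whereas a real network would learn them during training.

```python
from math import exp

def dense(inputs, weights, biases):
    """Fully connected layer: one weighted sum plus bias per output unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    return [max(0.0, v) for v in values]

def softmax(values):
    shifted = [exp(v - max(values)) for v in values]  # shift for numerical stability
    total = sum(shifted)
    return [s / total for s in shifted]

# Arbitrary illustrative weights (not trained).
W1 = [[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]]   # hidden layer: 3 inputs -> 2 units
b1 = [0.0, 0.1]
W2 = [[1.0, -1.0], [-1.0, 1.0]]             # output layer: 2 units -> 2 classes
b2 = [0.0, 0.0]

x = [1.0, 2.0, 3.0]                         # input layer: raw values
hidden = relu(dense(x, W1, b1))             # hidden layer: transformed features
output = softmax(dense(hidden, W2, b2))     # output layer: class probabilities

print([round(p, 2) for p in output])  # → [0.45, 0.55]
```

Deep networks stack many such hidden layers, so each one can build on the features produced by the one before it.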

Warning

Deep learning models are often called "black boxes" because their decision-making processes are difficult to explain. This is a significant drawback in industries like healthcare and finance that require transparency. Explainable AI (XAI) is actively working to address this challenge.

4. Deep Learning Architectures

CNN (Convolutional Neural Network)

Designed for image processing. Convolution layers hierarchically learn features like edges, textures, and objects from images.

Key Models: LeNet, AlexNet, VGG, ResNet, Inception, EfficientNet

Applications: Image classification, object detection, facial recognition, medical imaging, autonomous driving
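To see how a convolution layer picks up edges, here is a minimal sketch in plain Python. The 3x3 vertical-edge kernel is hand-written for illustration; in a trained CNN the kernel values are learned rather than specified.

```python
# A tiny grayscale "image": dark on the left, bright on the right.
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# Hand-written vertical edge detector (a trained CNN would learn such values).
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

def conv2d(img, k):
    """Slide the kernel over the image (no padding, stride 1)."""
    kh, kw = len(k), len(k[0])
    out_h, out_w = len(img) - kh + 1, len(img[0]) - kw + 1
    return [
        [
            sum(img[i + di][j + dj] * k[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

feature_map = conv2d(image, kernel)
print(feature_map)  # → [[0, 3, 3, 0], [0, 3, 3, 0]]
```

The feature map is zero over flat regions and large exactly where dark meets bright. Later layers combine such edge responses into textures and object parts.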

RNN (Recurrent Neural Network)

Designed for sequential data (time series, text, audio). Uses memory mechanisms to retain previous information for contextual predictions.

Variants: Vanilla RNN, LSTM (Long Short-Term Memory), GRU (Gated Recurrent Unit), Bidirectional RNN

Applications: Language modeling, machine translation, speech recognition, time series forecasting
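The memory mechanism of a vanilla RNN boils down to one recurrence, sketched here with a single scalar hidden state. The weights are arbitrary assumptions, and real RNNs use vectors and matrices, but the idea is the same: each step mixes the current input with the previous state.

```python
from math import tanh

# Arbitrary illustrative weights (a real RNN learns these).
W_INPUT, W_HIDDEN, BIAS = 0.5, 0.8, 0.0

def rnn_final_state(sequence):
    """Run h_t = tanh(W_INPUT * x_t + W_HIDDEN * h_{t-1} + BIAS) over the sequence."""
    h = 0.0
    for x in sequence:
        h = tanh(W_INPUT * x + W_HIDDEN * h + BIAS)
    return h

early_signal = rnn_final_state([1, 0, 0])  # the signal arrives first, then fades
late_signal = rnn_final_state([0, 0, 1])   # the signal arrives last

print(early_signal < late_signal)  # → True
```

Both sequences contain the same values, yet the final states differ because order matters. The early signal fading over time also hints at why long sequences are hard for vanilla RNNs, which is the problem LSTM and GRU were designed to reduce.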

Transformer

The Transformer is a revolutionary architecture introduced by Google researchers in the 2017 paper "Attention Is All You Need". Its self-attention mechanism relates every input element to every other element in parallel, overcoming the sequential processing limitations of RNNs.

Key Models: BERT, GPT series, T5, Claude, LLaMA, PaLM, ViT (Vision Transformer)

Applications: NLP, large language models, image generation (DALL-E), multimodal AI, code generation
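Self-attention can be sketched in a few lines of plain Python. As a simplifying assumption, the token vectors themselves are used as queries, keys, and values; a real Transformer first passes them through learned projection matrices and uses multiple attention heads.

```python
from math import exp, sqrt

def softmax(values):
    shifted = [exp(v - max(values)) for v in values]
    total = sum(shifted)
    return [s / total for s in shifted]

def self_attention(tokens):
    """Scaled dot-product attention with queries = keys = values = tokens."""
    d = len(tokens[0])
    outputs, all_weights = [], []
    for query in tokens:
        # Score this token against every token, including itself.
        scores = [sum(q * k for q, k in zip(query, key)) / sqrt(d) for key in tokens]
        weights = softmax(scores)
        # Output is a weighted mix of all token vectors.
        mixed = [sum(w * value[j] for w, value in zip(weights, tokens))
                 for j in range(d)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Three made-up 2-dimensional token embeddings.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens)

for row in weights:
    print([round(w, 2) for w in row])
```

Each row of weights sums to 1 and shows how strongly one token attends to the others. Nothing in the loop body depends on the previous iteration, which is why the computation parallelizes so well compared with an RNN.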

Evolution of Deep Learning Architectures

1998 - LeNet (CNN) ───────► Handwritten digit recognition

2012 - AlexNet (CNN) ──────► ImageNet revolution

2014 - GRU (RNN) ──────────► More efficient sequence processing

2015 - ResNet (CNN) ───────► 152-layer deep network

2017 - Transformer ────────► Attention Is All You Need

2018 - BERT ────────────────► NLP revolution

2020 - GPT-3 ───────────────► Age of large language models

2024+ - Multimodal AI ──────► Text + Image + Audio convergence

5. Key Differences: Machine Learning vs Deep Learning

5.1 Data Requirements

ML algorithms can produce effective results with hundreds or thousands of data points. Deep learning delivers its best performance with hundreds of thousands or millions of data points. With small datasets, DL models face significant overfitting risk.

5.2 Compute Power and Infrastructure

Traditional ML algorithms run efficiently on standard CPUs. Deep learning models rely on large parallel matrix computations, so training anything beyond small models in practice calls for GPUs (Graphics Processing Units) or TPUs (Tensor Processing Units). This significantly increases infrastructure costs.

5.3 Interpretability

ML models like decision trees or linear regression are easy to interpret: a prediction can be traced back to specific rules or coefficients. Deep learning models spread their reasoning across millions of parameters, which makes individual decisions extremely difficult to explain. This is a critical constraint in finance, healthcare, and legal domains.

6. When to Use ML vs DL

Choose ML When

- Small/medium datasets (<10,000 records)

- Structured, tabular data

- Model explainability is critical

- Limited compute resources

- Rapid prototyping needed

- Domain expertise enables feature engineering

- Regulatory compliance required (GDPR, HIPAA)

Choose DL When

- Large amounts of data (>100,000 records)

- Unstructured data (images, audio, text)

- Complex patterns and relationships

- Sufficient GPU/TPU resources available

- Transfer learning is applicable

- Highest accuracy is the priority

- Automatic feature extraction desired

Pro Tip

In practice, the best strategy is to start with simpler ML models, establish a baseline, and then compare with deep learning. A well-tuned XGBoost model can sometimes outperform a complex DL model -- especially with structured data.

7. Real-World Applications

8. Algorithm Comparison

9. Training Process Differences

[Diagram: ML Training Pipeline]

[Diagram: DL Training Pipeline]

ML model training typically takes minutes to a few hours, while deep learning training can take days, weeks, or even months. Large language models (GPT-4, Claude) require millions of dollars in compute and months of training. However, transfer learning allows pre-trained models to be adapted for different tasks, dramatically reducing this time.

10. Hardware Requirements

Cost Warning

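Before starting a deep learning project, plan your cloud computing costs carefully. GPU instances on AWS, Google Cloud, and Azure range from roughly $1 to more than $30 per hour depending on the GPU type, and since training can take weeks, costs add up quickly. Spot/preemptible instances can cut costs by roughly 60-90%, but they can be interrupted at short notice, so checkpoint your training regularly.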
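A quick back-of-the-envelope estimate helps before committing to a training run. The numbers below are hypothetical: a $3/hour GPU, 8 GPUs, two weeks of training, and a 70% spot discount. Substitute your provider's actual prices.

```python
def training_cost(hourly_rate, gpus, hours, spot_discount=0.0):
    """Estimated cost in dollars: rate x GPUs x hours, minus any spot discount."""
    return hourly_rate * gpus * hours * (1 - spot_discount)

HOURS = 24 * 14  # two weeks of continuous training

on_demand = training_cost(hourly_rate=3.0, gpus=8, hours=HOURS)
spot = training_cost(hourly_rate=3.0, gpus=8, hours=HOURS, spot_discount=0.7)

print(f"on-demand: ${on_demand:,.2f}")  # → on-demand: $8,064.00
print(f"spot:      ${spot:,.2f}")       # → spot:      $2,419.20
```

The estimate ignores storage, data transfer, and time lost to spot interruptions, so treat it as a lower bound.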

11. Career Paths

ML-Focused Career Paths

- Data Scientist: Data analysis, model development, business insights

- ML Engineer: Model deployment, MLOps, scaling

- Data Analyst: Statistical analysis, reporting

- BI Developer: Decision support systems

Skills: Python, R, SQL, Scikit-learn, Pandas, Statistics, Mathematics

DL-Focused Career Paths

- DL Research Scientist: New architectures and methods

- NLP Engineer: Language models, chatbots, translation

- Computer Vision Engineer: Image/video analysis

- AI/ML Architect: End-to-end AI system design

Skills: PyTorch, TensorFlow, CUDA, Linear Algebra, Calculus, Research

12. Learning Resources

Machine Learning Resources

- Coursera: Andrew Ng - ML Specialization

- Book: Hands-On ML with Scikit-Learn (Geron)

- Kaggle: Competitions and datasets

- Fast.ai: Practical ML for Coders

- Google: ML Crash Course

Deep Learning Resources

- Coursera: Deep Learning Specialization (Ng)

- Book: Deep Learning (Goodfellow et al.)

- Fast.ai: Practical Deep Learning

- PyTorch: Official Tutorials

- Hugging Face: NLP Course

13. Frequently Asked Questions

Q1: Is deep learning always better than machine learning?

Absolutely not. Deep learning excels with large, unstructured datasets, but small datasets, structured data, and scenarios requiring explainability often favor classical ML algorithms like XGBoost or Random Forest, which can be more practical and even more accurate.

Q2: Can I learn deep learning without learning ML first?

While technically possible, it is not recommended. Core ML concepts (bias-variance tradeoff, overfitting, cross-validation, gradient descent) are foundational to deep learning. Solidly understanding these concepts makes your transition to DL much more effective.

Q3: Do I absolutely need a GPU for deep learning?

For small models and prototyping, CPU can suffice. But for real projects and large models, a GPU is practically essential. Free options include Google Colab and Kaggle notebooks. For professional work, NVIDIA A100/H100 GPUs or cloud solutions are preferred.

Q4: What is transfer learning and why does it matter?

Transfer learning reuses what a model learned on one task as the starting point for another. For example, a CNN trained on ImageNet can be repurposed for medical image analysis by fine-tuning it on a much smaller labeled dataset. Because most of the representation is already learned, this can cut training time and data requirements substantially, in some cases by as much as 90%, making it invaluable when working with limited data or resources.
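The core pattern, a frozen pretrained feature extractor plus a small new head trained on the target task, can be shown with a toy sketch in plain Python. Everything here is an assumption for illustration: the "pretrained" extractor is a fixed hand-written function standing in for a real network, and the dataset is invented. In practice you would load a pretrained model from a framework such as PyTorch or TensorFlow.

```python
from math import exp

def pretrained_features(x):
    """Stand-in for a pretrained network. It is frozen: never updated below."""
    return [x[0] + x[1], x[0] - x[1]]

# Small labeled dataset for the new target task (invented values).
target_data = [
    ([1, 1], 1), ([2, 0], 1), ([0, 2], 1), ([3, 1], 1),
    ([-1, -1], 0), ([-2, 0], 0), ([0, -2], 0), ([-3, -1], 0),
]

def sigmoid(z):
    return 1 / (1 + exp(-z))

def head_score(head, x):
    return sum(w * f for w, f in zip(head, pretrained_features(x)))

# New task-specific head: logistic regression trained by gradient descent.
head = [0.0, 0.0]
LEARNING_RATE = 0.1

for epoch in range(100):
    grad = [0.0, 0.0]
    for x, y in target_data:
        features = pretrained_features(x)
        error = sigmoid(head_score(head, x)) - y
        for j in range(len(head)):
            grad[j] += error * features[j]
    # Only the head's weights change; the extractor stays as it was.
    head = [w - LEARNING_RATE * g / len(target_data) for w, g in zip(head, grad)]

def predict(x):
    return int(head_score(head, x) > 0)

print(all(predict(x) == y for x, y in target_data))  # → True
```

Only two weights are trained here, which is why eight examples are enough. The same logic explains why fine-tuning a large pretrained model needs far less data than training it from scratch.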

Q5: Which field offers more job opportunities in 2026?

Both fields are in high demand. ML engineering has a broader job market as it applies to virtually every industry. DL specialization offers more niche but typically higher-paying positions, especially in NLP, computer vision, and generative AI. The ideal strategy is to build competency in both areas.

Conclusion

Machine learning and deep learning are two powerful branches of artificial intelligence. Machine learning offers a broader, more interpretable, and resource-friendly approach, while deep learning provides superior performance on complex problems with unstructured data.

Choosing the right technology depends on your project requirements: data volume, budget, time constraints, and problem complexity are the determining factors. Understanding both fields gives you a comprehensive perspective in the AI landscape.
