Table of Contents

- 1. Introduction: Child Safety in the Age of AI

- 2. Key AI Risks for Children

- 3. Age-Specific Recommendations

- 4. Parental Control Tools and Software

- 5. AI Literacy: Educating Your Child

- 6. AI-Powered Cyberbullying and Prevention

- 7. Deepfake Risks and Protection

- 8. AI Policies in Schools

- 9. Creating a Family Technology Agreement

- 10. Frequently Asked Questions

Introduction: Child Safety in the Age of AI

Artificial intelligence technologies are permeating every aspect of our lives, and children are encountering these technologies at increasingly younger ages. Smart assistants, recommendation algorithms, chatbots, and generative AI tools have become integral parts of children's digital experiences. While these technologies offer tremendous opportunities, they also carry significant risks that every parent must understand.

As of 2026, an estimated 78% of children aged 6-17 worldwide interact regularly with AI-powered applications. With exposure this widespread, it is more important than ever for parents to understand AI safety and take proactive steps to protect their children.

In this comprehensive guide, we will explore the practical steps you can take to protect your children from AI risks, provide age-specific strategies, and introduce the tools available to help you navigate this complex landscape.

Key AI Risks for Children

Understanding the potential risks that AI poses to children is the first step in developing effective protection strategies. Here are the most critical risk areas:

Data Privacy and Personal Information Collection

AI-powered applications collect children's usage patterns, conversations, location data, and preferences. This data is used to deliver personalized experiences, but a data breach can expose children's identifying information. Children are often unaware of what information they are sharing and how it might be used against them in the future.

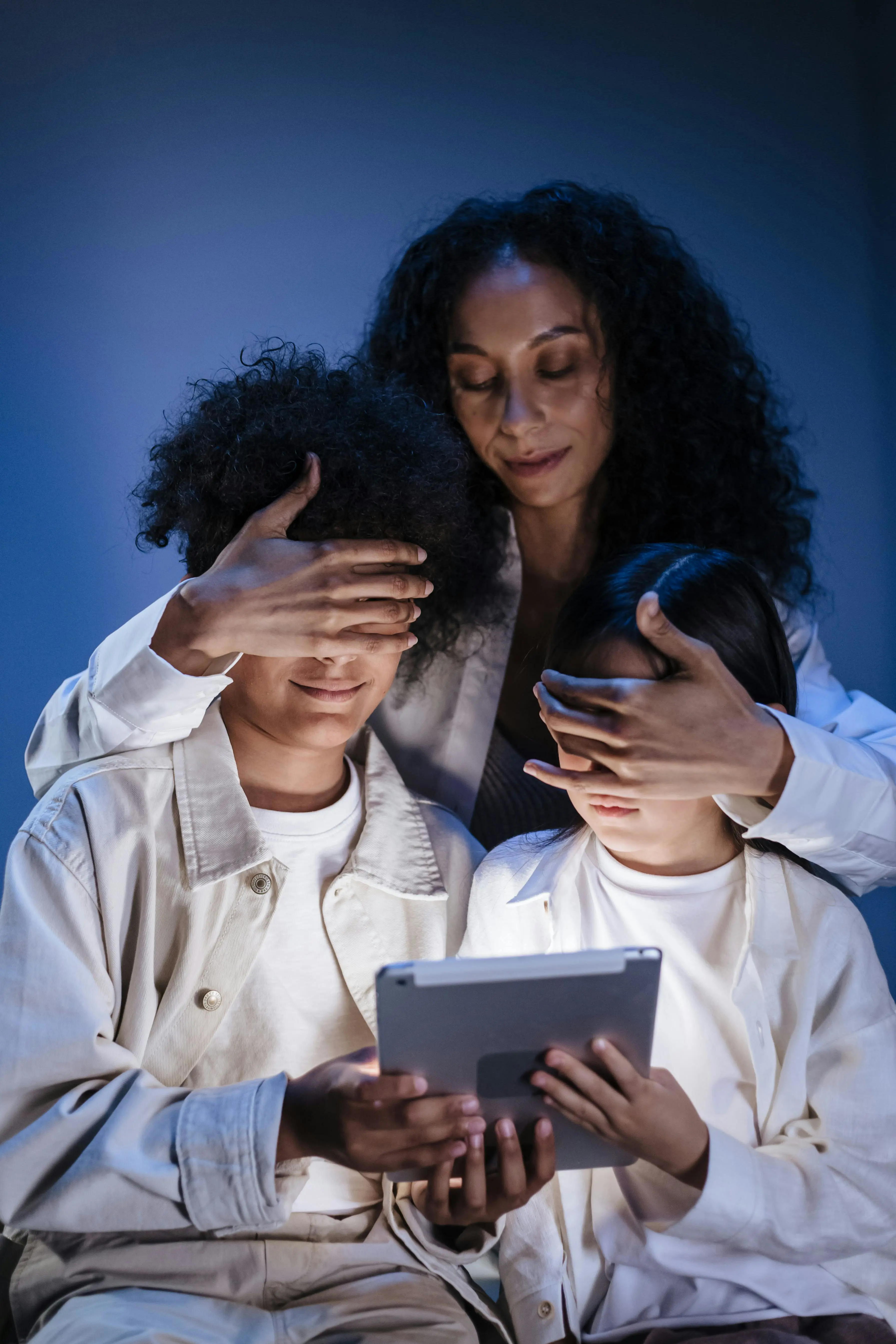

Exposure to Inappropriate Content

Generative AI tools can sometimes produce violent, sexual, or harmful content despite filter mechanisms. Children may attempt to bypass these filters out of curiosity, or encounter unexpected responses even from seemingly innocent queries. The rapid evolution of AI means content filters are constantly playing catch-up.

Algorithmic Manipulation and Addiction

AI algorithms in social media platforms and video applications are designed to keep children engaged for as long as possible. Personalized content recommendations, notification strategies, and reward mechanisms can lead to digital addiction in children. This affects sleep patterns, academic performance, and social relationships in profound ways.

Emotional Dependency and Social Isolation

AI chatbots and virtual companionship applications can cause children to form emotional bonds with artificial intelligence rather than real human relationships. This hinders social skill development and can deepen feelings of loneliness. Some children may prefer AI companions because they never disagree, judge, or challenge them, yet disagreement and challenge are precisely what make real friendships valuable for growth.

| Risk Category | Impact Level | Prevalence |
|---|---|---|
| Data Privacy Breach | High | Very Common |
| Inappropriate Content | High | Common |
| Algorithmic Addiction | Very High | Very Common |
| Deepfake Risk | Very High | Increasing |
| Cyberbullying | Very High | Common |

Age-Specific Recommendations

Each age group interacts with AI differently, and protection strategies must be tailored accordingly. Below you will find customized guidance for each developmental stage.

Ages 3-6: Early Childhood

Children in this age group typically encounter AI through smart speakers, voice assistants, and educational apps. Key considerations for parents include:

- Always supervise interactions with voice assistants

- Use only age-appropriate, trusted educational applications

- Limit screen time to 30-60 minutes per day

- Explain to your child in simple terms that AI is a machine, not a person

- Teach them never to share personal information (name, address, school) with voice assistants

Ages 7-12: Elementary and Middle School

During this period, children begin to use technology more independently. They interact with AI more intensively through games, social applications, and educational platforms.

- Install parental control software and update it regularly

- Use AI chatbots together and discuss the responses critically

- Teach the concept of online privacy with concrete examples

- Demonstrate with real examples that AI does not always provide accurate information

- Delay social media use or supervise it strictly

- Establish clear rules about using AI for homework

Ages 13-17: Adolescence

Teenagers are the most intensive users of AI technologies. Social media, generative AI tools, games, and communication platforms are central to their daily lives.

- Establish trust-based, open communication rather than pure control

- Provide detailed information about deepfake risks and digital footprint

- Teach them to use AI tools with critical thinking skills

- Guide them on digital identity management and online reputation

- Discuss the copyright and ethical dimensions of AI-generated content

- Create a "Family Technology Agreement" together

Parental Control Tools and Software

Technology also offers powerful tools for protecting children. Here are the most effective parental control solutions available in 2026:

Device-Level Controls

Built-in tools like Apple Screen Time, Google Family Link, and Microsoft Family Safety provide robust device-level control mechanisms. These tools allow you to manage app download permissions, set screen time limits, and configure content filters without installing any third-party software.

Network-Level Protection

DNS filtering configured on your router covers every device that connects to your home network. Free services like OpenDNS FamilyShield and CleanBrowsing block access to inappropriate websites. More advanced solutions offer AI-powered threat detection that adapts to new threats automatically.
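
To make the idea of DNS filtering more concrete, here is a minimal sketch of the core check such a filter performs: refusing a domain when it, or any parent domain, appears on a blocklist. This is a toy model under stated assumptions, not how any particular service is implemented. The function names, the blocklist, and the `.test` domains are all hypothetical.

```python
def normalize(domain: str) -> str:
    """Lowercase a domain and drop surrounding whitespace and any trailing dot."""
    return domain.strip().lower().rstrip(".")


def is_blocked(domain: str, blocklist: set[str]) -> bool:
    """Return True if the domain or any of its parent domains is on the blocklist."""
    labels = normalize(domain).split(".")
    # Check "www.example.test", then "example.test", then "test".
    for i in range(len(labels)):
        if ".".join(labels[i:]) in blocklist:
            return True
    return False


# Hypothetical blocklist; real filtering services maintain far larger category lists.
BLOCKLIST = {"adult-example.test", "gambling-example.test"}

print(is_blocked("www.adult-example.test", BLOCKLIST))  # True
print(is_blocked("homework-help.test", BLOCKLIST))      # False
```

Matching on parent domains means a blocked site cannot be reached through a subdomain, which is one reason filtering at the network level is harder for a child to sidestep than a per-browser setting.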

AI-Powered Safety Software

Next-generation parental control software uses artificial intelligence to analyze children's online behavior. These tools can detect cyberbullying indicators, inappropriate content sharing, and risky communication patterns. Applications like Bark, Qustodio, and Net Nanny are leading solutions in this space.

| Tool | Platform | Key Feature | Price |
|---|---|---|---|
| Google Family Link | Android, iOS | App management, location tracking | Free |
| Apple Screen Time | iOS, macOS | Screen time limits | Free |
| Bark | All platforms | AI-powered content monitoring | Paid |
| Qustodio | All platforms | Advanced reporting | Paid |
| OpenDNS | Network level | DNS filtering | Free |

AI Literacy: Educating Your Child

The most effective long-term protection strategy is to build your child's AI literacy. AI literacy encompasses the skills of understanding how artificial intelligence works, being able to distinguish AI-generated content, and using this technology responsibly.

Core Components of AI Literacy

- Understanding: Grasping what AI is, how it learns, and what its limitations are

- Recognition: Being able to identify text, images, and audio generated by AI

- Critical thinking: Questioning and verifying information provided by AI

- Ethical awareness: Evaluating the ethical dimensions of AI usage

- Responsible use: Using AI tools appropriately and honestly

Home Activities for Building AI Literacy

Practical activities you can do with your child to build their understanding of AI:

- Ask questions to an AI chatbot together and evaluate the responses critically

- Play "AI or Human?": guess whether images or texts were generated by AI

- Show with concrete examples how AI can produce biased results

- Build a simple AI project together (image classification, chatbot creation)

- Discuss AI-related news stories during family conversations

- Compare AI-generated creative writing with human-written pieces

AI-Powered Cyberbullying and Prevention

Artificial intelligence has made cyberbullying more sophisticated and devastating. AI tools have diversified the weapons available to bullies and made detection increasingly difficult for parents and educators alike.

Types of AI-Enhanced Bullying

AI-powered cyberbullying manifests in several forms that parents need to be aware of:

- Deepfake bullying: Superimposing the victim's face onto inappropriate images or videos

- Voice cloning: Creating fake audio recordings that imitate the victim's voice

- Automated harassment: Mass messaging through bot accounts across multiple platforms

- AI-assisted identity theft: Creating fake profiles using the victim's identity

- Manipulative text generation: Producing personalized degrading messages using AI

Prevention and Intervention Strategies

Measures parents should take to protect their children from AI-powered cyberbullying:

- Regularly talk with your child about their online experiences in a non-judgmental way

- Learn to recognize the signs of cyberbullying: sudden mood changes, hiding devices, social withdrawal

- Teach your child evidence collection methods (taking screenshots, saving messages)

- Know how to contact school administration and, if necessary, law enforcement

- Teach your child to minimize their digital footprint

- Review social media privacy settings together on a regular basis

Deepfake Risks and Protection

Deepfake technology poses one of the most serious AI risks for children. Artificially generated fake images and video content can cause severe damage to children's reputation and psychological well-being, with effects that can last well into adulthood.

The Scale of Deepfake Threats

Today, creating deepfakes no longer requires advanced technical knowledge. Free or low-cost applications allow anyone to create realistic fake images from just a few photographs. This situation poses a particularly serious threat among school-age children, where social dynamics and peer pressure create a volatile environment.

- Reputational damage: Fake images can spread rapidly and leave permanent harm

- Psychological trauma: Anxiety, depression, and loss of self-esteem in affected children

- Blackmail risk: Fake content used to demand money or personal information from children

- Legal consequences: Creating or sharing deepfakes can carry legal ramifications

Protective Measures

- Be cautious when sharing your children's photos on social media (sharenting)

- Teach your child not to share high-resolution photos publicly

- Learn to use deepfake detection tools and teach your teen to use them

- Report any deepfake content to the relevant platform and authorities immediately

- Explain the concept of deepfakes to your child in an age-appropriate manner

AI Policies in Schools

Schools play a critical role in children's interactions with AI. Both the use of AI as an educational tool and educating students about AI safety are important components of school policies that directly affect your child's daily experience.

What to Expect from Schools

- A clear and transparent AI usage policy that is communicated to parents

- AI literacy education integrated into the curriculum

- Clear rules about using AI for homework and assignments

- Teacher training on AI safety and responsible technology use

- Protection of student data from being shared with AI tools without consent

- Intervention protocols for AI-powered cyberbullying incidents

Parent-School Collaboration

Effective protection requires parents and schools working together as partners:

- Learn about and evaluate the school's AI policy before the academic year begins

- Raise AI safety concerns during parent-teacher meetings

- Review the privacy policies of digital tools used by the school

- Stay informed about AI-related incidents at school

- Participate in parent-school collaboration committees and advocacy groups

Creating a Family Technology Agreement

A family technology agreement is a written contract that establishes shared rules for both children and parents regarding technology use. This agreement clarifies expectations, reduces conflicts, and gives everyone a reference point when disagreements arise.

Key Elements to Include

- Screen time limits: Separate limits for weekdays and weekends

- AI tool usage rules: Which tools can be used and for what purposes

- Privacy rules: Information that should never be shared online

- Social media rules: Which platforms are allowed and sharing boundaries

- Incident reporting: What to do when encountering disturbing situations

- Consequences: Fair sanctions for rule violations that are agreed upon in advance

- Parent responsibilities: Rules that parents also commit to following
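
For families who like to keep the agreement in a structured form, the elements above could be sketched as a small data structure. This is only an illustration: the class name, field names, and the 60 and 120 minute limits are hypothetical, and the sketch covers only some of the listed elements.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class FamilyTechAgreement:
    """Hypothetical structure mirroring some key elements of a family agreement."""
    weekday_limit_min: int
    weekend_limit_min: int
    allowed_ai_tools: dict[str, str] = field(default_factory=dict)  # tool -> purpose
    never_share: list[str] = field(default_factory=list)
    parent_commitments: list[str] = field(default_factory=list)

    def limit_for(self, day: date) -> int:
        """Return the screen-time limit in minutes for a given date."""
        # date.weekday(): Monday is 0, Saturday is 5, Sunday is 6.
        return self.weekend_limit_min if day.weekday() >= 5 else self.weekday_limit_min

    def minutes_remaining(self, day: date, used_min: int) -> int:
        """Minutes left for the day, never below zero."""
        return max(0, self.limit_for(day) - used_min)


# Example values are placeholders; each family agrees on its own.
agreement = FamilyTechAgreement(
    weekday_limit_min=60,
    weekend_limit_min=120,
    allowed_ai_tools={"chatbot": "brainstorming and research"},
    never_share=["home address", "school name", "passwords"],
    parent_commitments=["no phones at the dinner table"],
)

print(agreement.minutes_remaining(date(2026, 1, 5), used_min=45))   # Monday: 15
print(agreement.minutes_remaining(date(2026, 1, 10), used_min=45))  # Saturday: 75
```

Keeping parent commitments in the same structure as the child's rules reflects the point that the agreement binds everyone in the household.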

Frequently Asked Questions

When can my child start using AI tools like ChatGPT?

Most AI tools' terms of service set a minimum age of 13. Regardless of age limits, teach the fundamentals of AI literacy before allowing your child to use these tools, and use them together at first. For children under 13, supervised use for educational purposes is the safest approach. Focus on building critical thinking skills first.

What should I do if AI is doing my child's homework?

First, stay calm and take an educational rather than a punitive approach. Discuss with your child how relying on AI affects their learning and long-term development. Rather than banning AI for homework entirely, teach them to use it as a research and brainstorming tool, not as a substitute for their own thinking. Contact the school to learn its AI usage policy and set home rules accordingly.

How can I monitor my child's online safety while respecting their privacy?

Transparency is key. Openly tell your child which monitoring tools you are using and why. Gradually reduce the level of supervision as they grow older and demonstrate responsibility. Rather than reading their messages every day, choose tools that monitor general usage patterns and flag concerning behavior. Emphasize that the goal is safety, not control, and that trust is earned incrementally.

What should I do if I find deepfake content of my child?

Act immediately: take screenshots or recordings of the content as evidence, use the platform's reporting mechanism to request removal, and file a report with law enforcement. Explain the situation to your child in an age-appropriate way and seek professional psychological support if needed. Document everything including timestamps and URLs for any legal proceedings that may follow.
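
For parents comfortable with a little scripting, one possible way to keep the documentation consistent is a simple evidence log. The sketch below records the URL, a UTC timestamp, and a SHA-256 fingerprint of the saved screenshot. The function name, field names, and example URL are hypothetical, and the fingerprint is an added suggestion rather than a legal requirement.

```python
import hashlib
import json
from datetime import datetime, timezone


def evidence_entry(url: str, screenshot_bytes: bytes, note: str = "") -> dict:
    """Build one log entry: where the content was seen, when, and a file fingerprint."""
    return {
        "url": url,
        "recorded_at_utc": datetime.now(timezone.utc).isoformat(),
        # A SHA-256 fingerprint can help show later that the saved file is unchanged.
        "sha256": hashlib.sha256(screenshot_bytes).hexdigest(),
        "note": note,
    }


# Placeholder bytes stand in for a real screenshot read with open(path, "rb").
entry = evidence_entry(
    "https://example.com/post/123",
    b"placeholder screenshot bytes",
    note="Reported to platform",
)
print(json.dumps(entry, indent=2))
```

A plain notebook with the same three details (link, date and time, and where the screenshot is stored) serves the same purpose if scripting is not an option.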

How can I recognize the negative effects of AI on my child?

Signs to watch for include: disruption in sleep patterns, decline in academic performance, loss of interest in social activities, excessive reactions when device use is interrupted, avoidance of real-world activities, and signs of anxiety or depression. When you notice these signs, first have an open conversation with your child without accusation, and consult a child psychologist if the behaviors persist or intensify.

What are the three most important steps to ensure my child interacts safely with AI?

First, establish open communication: have regular, non-judgmental conversations with your child about technology and their online experiences. Second, develop AI literacy: learn together about how artificial intelligence works, its limitations, and its risks. Third, grant gradual freedom: use age-appropriate control mechanisms while teaching your child to take responsibility, increasing their autonomy as they demonstrate good judgment and maturity.
